Reid Honan, University of South Australia

In the world of higher education, the first thing every student asks about is the assessment for the course. Often, learning the actual content of the course comes a distant second as a growing number of students seek to merely pass instead of fully utilising this opportunity (that they are paying for) to dig in and learn. I know of students who calculated the exact percentage they were currently sitting on so that they could disengage from the course halfway through because they had effectively passed. In this Academic world well-designed assessment is a necessity as it directly impacts the amount of the learning consumed by these students.

For a long time, the Higher Education sector has utilised a handful of core assessment components including presentations, essays, practical demonstrations and examinations. As class sizes continue to grow and economic pressures to keep costs low continue, a delicate balance is struck between assessing properly and assessing quickly. Most of the current assessment techniques are focused on students generating an artefac (e.g., report, block of code) and the teaching team assessing that artefact with the overall idea that the student would not have been able to generate the artefact without understanding the course concepts. This approach is not a perfect substitute measure of learning. However, it has grown to be the default substitute because until now the only other means of generating the artefact was to get other people to do it for you.

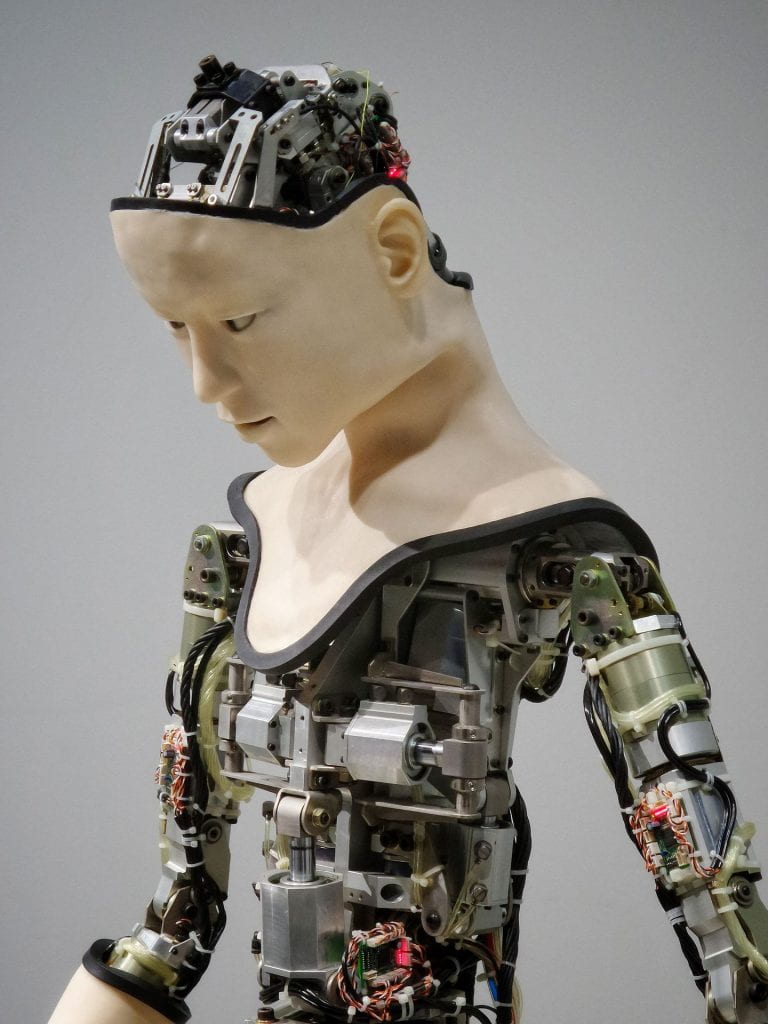

Not since the printing press made written work more easily available has there been such a fundamental shift in the education sector, even the introduction of the computer and internet merely shifted the words from paper to the screen. Generative Artificial Intelligence (AI) has completely flipped the world of education upside down. The ability to have the artefact generated for you without involving other people is revolutionary and has severe implications for future assessment.

What are the Generative AI tools floating around?

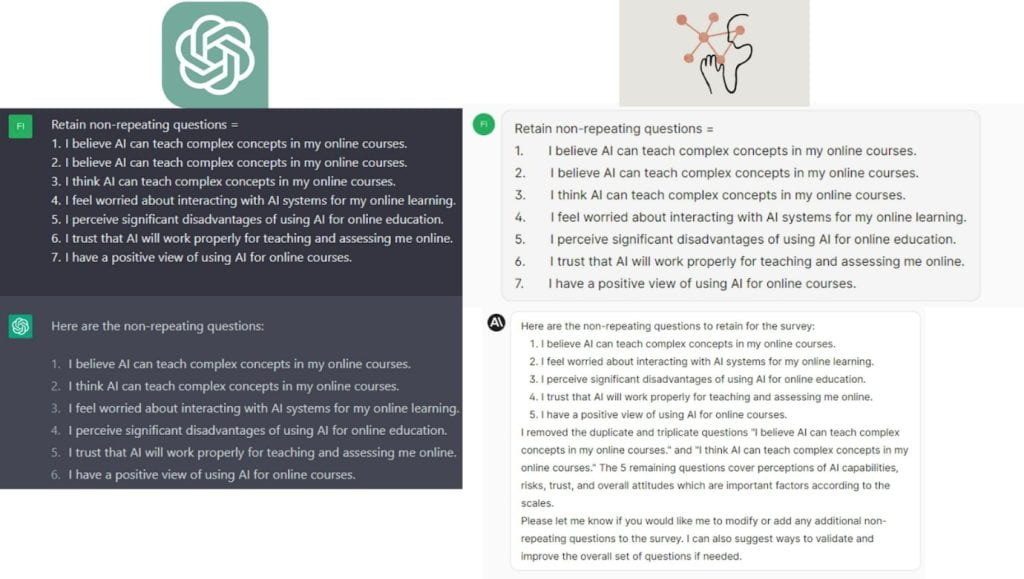

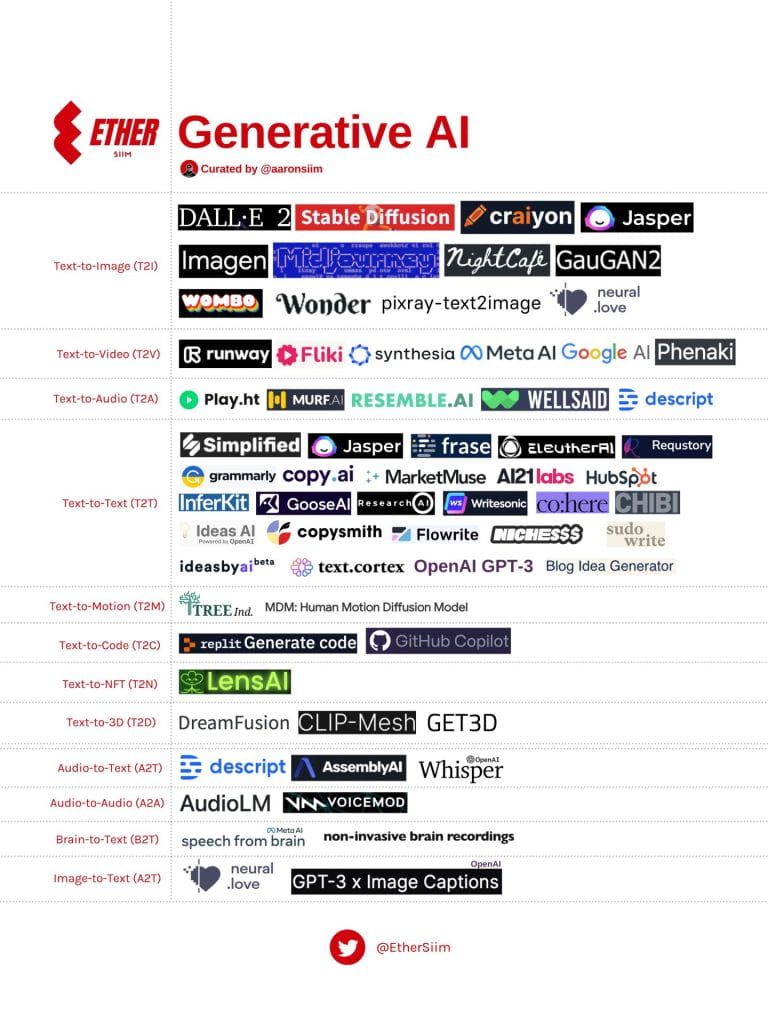

There are an ever-increasing number of Generative AI tools in use, but a summary can be found in the below image:

For this article we will be focusing on text-to-text and text-to-code tools as these are the most relevant to the author.

How can AI be used to help Students?

Students are reportedly using the tools for the following reasons:

- To summarise content

- To search for answers to questions

- To rewrite already written work

- To expand on dot points

- To complete assessments that the student deems not worthy of their time

- To write emails to academics

The problem is that students are often not reviewing the work or double checking the facts and sources leading to the spread of misinformation. This means that students are not engaging with the learning and are therefore not receiving the intended benefits.

How can AI be used to help Staff?

Generative AI was not only built to help students get out of doing work, but it also has a slew of legitimate use cases as well. Staff can use Generative AI tools to:

- Develop case studies or scenarios for assignments

- Generate feedback on students’ submissions

- Craft explanations of complicated concepts for students

- Write standard emails to students

In general, the staff use cases are based on reducing the amount of administrative work with the goal of giving staff more time with their students. The content generated still needs to be reviewed by the staff member however this is commonly done by default because staff tend to have a better understanding of the technologies place in society.

Have we been here before?

Doomsayers have always spoken out about any change or new technology including computers, calculators, grammar/spellcheckers and even programs like Word or IDE’s (Integrated Development Environment). The arguments are along the lines of ‘technology removes the need for people to know anything, all they need to do is push some buttons’. This overlooks the need for the user to understand how to use the technology, what prompts us and what the output means. All the technology listed above has become commonplace and is used in industry, schools and nobody blinks an eye. Unfortunately, these incremental shifts have required small changes but nothing like the scope and scale of Generative AI.

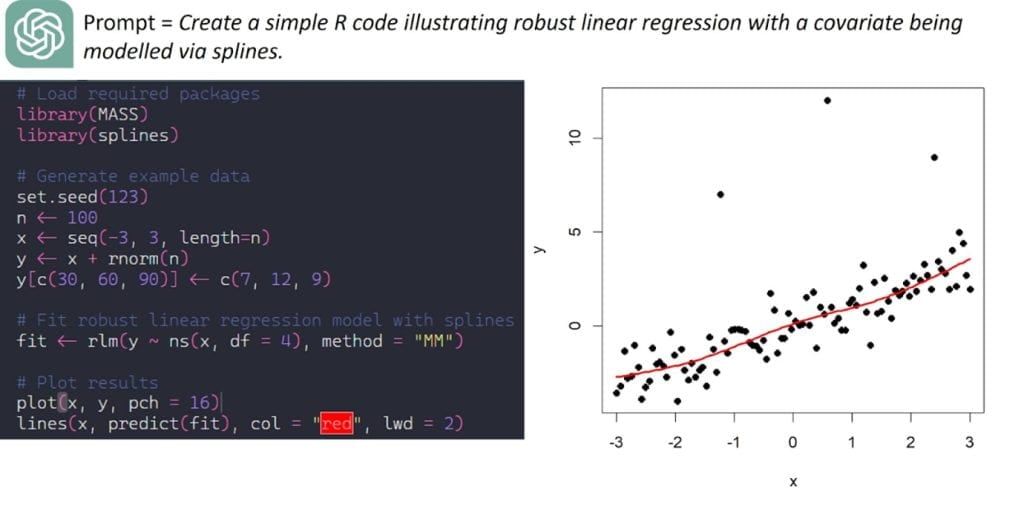

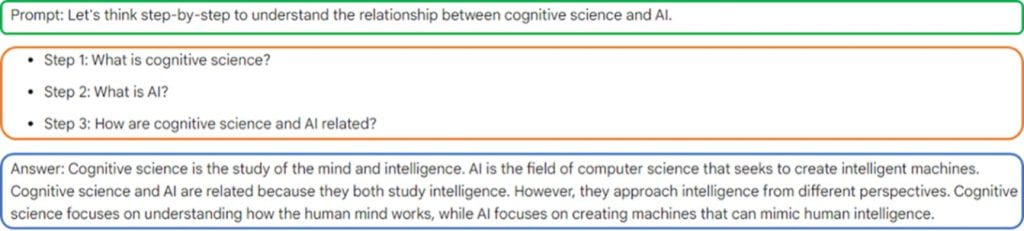

Generative AI has the potential to seemingly automate the entire problem and can allow people to produce content with absolutely no thought. Already people are treating these Generative AI tools as assistants and blindly trusting the output without doing the necessary legwork to confirm their accuracy. E.g.: Fig 2. Spelling error. This generated content is seeping onto the internet, which could cause problems in the future if that text is reused in future AI experiments.

What does this mean for the future?

Universities (and schools) need to actively address the problem of Generative AI and the terrifying answer is that assessments need to change. One of the main scare tactics is asking students to generate a solution and compare it to their own work. Tasks like this are meant to scare students and show them that the tools are inherently unreliable and should therefore be avoided. While a necessary lesson that these tools do have issues, the outcome is flawed as students are often less scared and more intrigued because they now have a direct example of how much AI can do.

Other academics are attempting to fight the oncoming wave by sourcing niche topics that AI struggles with. These tasks then form the core of their assessment. This strategy initially sounds logical, however with the overwhelming number of AI tools, there is no guarantee what tools students will use and the tasks that AI struggles with are constantly shifting.

Students need to understand what these tools can and cannot do but they also need to know how to use them. Students need exposure to these tools so that they understand the dangers, and this cannot come from the scare tactic assessments described above. To properly address this, assessments need to change to allow students to use these tools while still checking students’ knowledge and understanding. Schools already do this with email, and they are already pivoting to address this problem.

Personally, I am changing my assessment from simply producing the computer code to solve the problem to producing the code (AI tools allowed) and presenting/explaining the solution. Using this approach allows students to experiment with these tools and find their weak points while also necessitating the skills of reviewing and explaining their work. Arthur C. Clarke is quoted as saying “Any teacher who can be replaced by a machine should be” and I would extend that to say, “Any assessment that can be completed by a machine should be”.

Conclusion

Generative AI is already changing the world and has the potential to launch us into a fifth Industrial Revolution, as a number of jobs are now within the realm of automation. Universities need to stop trying to fight or avoid Generative AI and start integrating it into their courses. This is not an easy task and there is no standard approach yet, so each academic has a long trek ahead of them.